Why Choose an NVIDIA L40S Universal GPU Server?

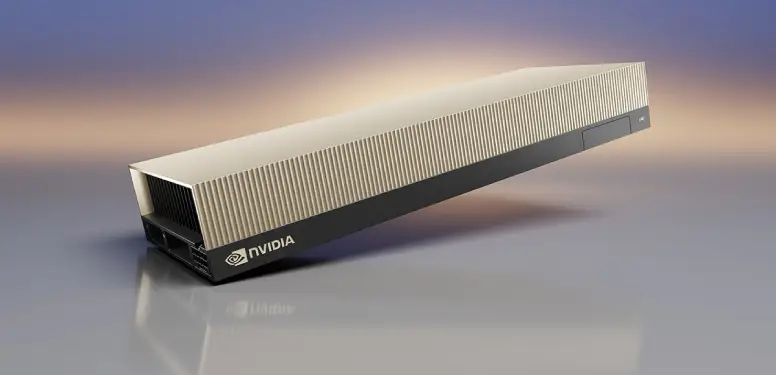

The NVIDIA L40S is a universal data center GPU, engineered to run AI and graphics workloads on the same hardware. Built on the NVIDIA Ada Lovelace architecture, the L40S dedicated server bridges the gap between large-scale AI computation and high-fidelity visual computing, so a single server can handle both kinds of work.

Equipped with 48GB of GDDR6 memory, 4th-generation Tensor Cores featuring the Transformer Engine, and 3rd-generation RT Cores, the L40S excels at Large Language Model (LLM) fine-tuning, rapid AI inference, complex 3D rendering, and NVIDIA Omniverse™ enterprise deployments. GPUYard's bare-metal L40S servers offer a highly cost-effective, readily available alternative to A100/H100 setups for organizations prioritizing inference, generative media, and digital twin simulations.
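To get a rough sense of what 48GB means for LLM work, the sketch below estimates how much memory a model's weights alone would need at a given precision. It is a back-of-the-envelope calculation, not a sizing tool: the function names, the 20% headroom reserved for activations and KV cache, and the treatment of 48GB as 48 x 10^9 bytes are all illustrative assumptions, and fine-tuning typically needs considerably more memory than the weights themselves.

```python
# Rough estimate of whether a model's weights fit in one L40S (48 GB).
# All names and the default headroom value are illustrative assumptions.

L40S_MEMORY_GB = 48  # from the spec above; treated here as 48 * 10^9 bytes

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "fp8": 1}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Memory for the weights only, in GB (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_l40s(num_params: float, dtype: str, headroom_fraction: float = 0.2) -> bool:
    """True if the weights fit after reserving a fraction of memory
    for activations, KV cache, and framework overhead (assumed 20%)."""
    budget_gb = L40S_MEMORY_GB * (1 - headroom_fraction)
    return weight_memory_gb(num_params, dtype) <= budget_gb


if __name__ == "__main__":
    for params, dtype in [(7e9, "fp16"), (13e9, "fp16"), (70e9, "fp16"), (70e9, "fp8")]:
        needed = weight_memory_gb(params, dtype)
        verdict = "fits" if fits_on_l40s(params, dtype) else "does not fit"
        print(f"{params / 1e9:.0f}B params @ {dtype}: {needed:.0f} GB of weights, {verdict}")
```

Under these assumptions, a 7-billion-parameter model in FP16 needs about 14 GB for its weights and fits comfortably on one card, while a 70-billion-parameter model in FP16 needs about 140 GB and would have to be quantized further or split across several GPUs.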