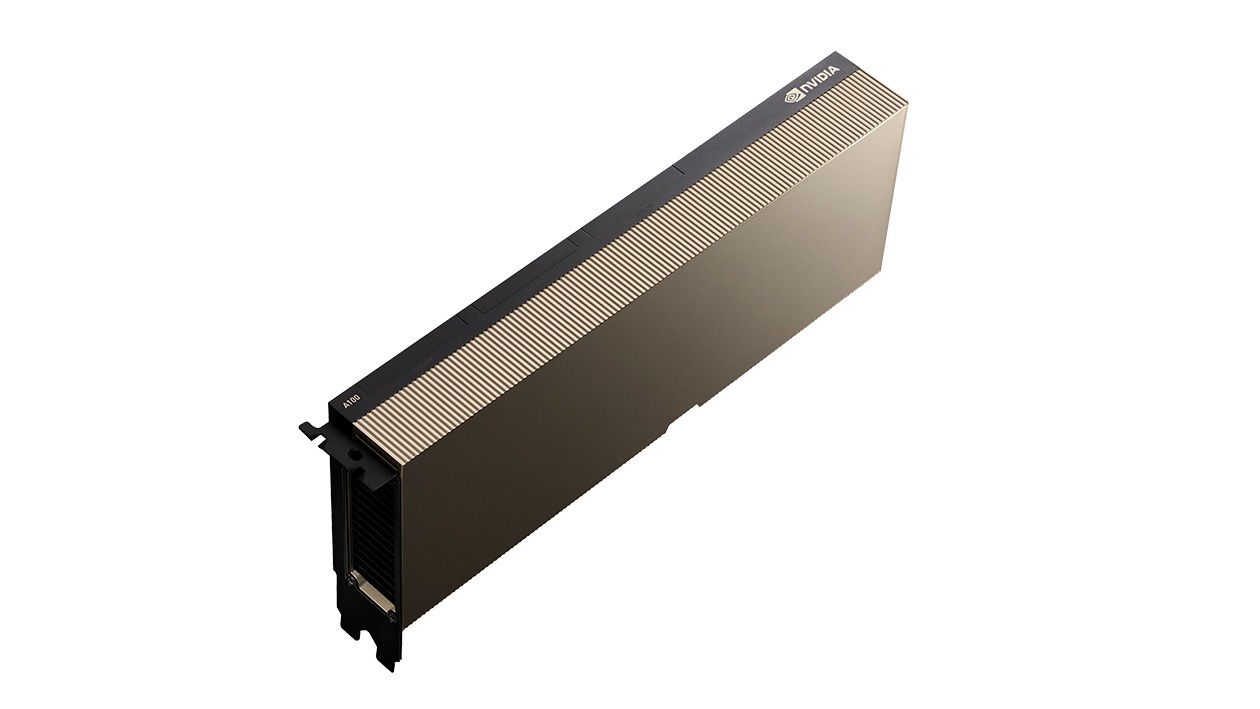

Why Choose an NVIDIA A100 GPU Dedicated Server?

Choose an NVIDIA A100 dedicated server to accelerate your most demanding AI, data analytics, and HPC workloads. Built on the NVIDIA Ampere architecture, the A100 pairs third-generation Tensor Cores with up to 80 GB of high-bandwidth memory, so large models and datasets can stay on the GPU during training and inference. Its Multi-Instance GPU (MIG) feature can partition a single A100 into as many as seven isolated GPU instances, each with its own share of compute and memory, so several jobs can run at once on one card. That makes the A100 a versatile and efficient choice for modern data centers.